See every risk on every frame.

Miss nothing worth acting on.

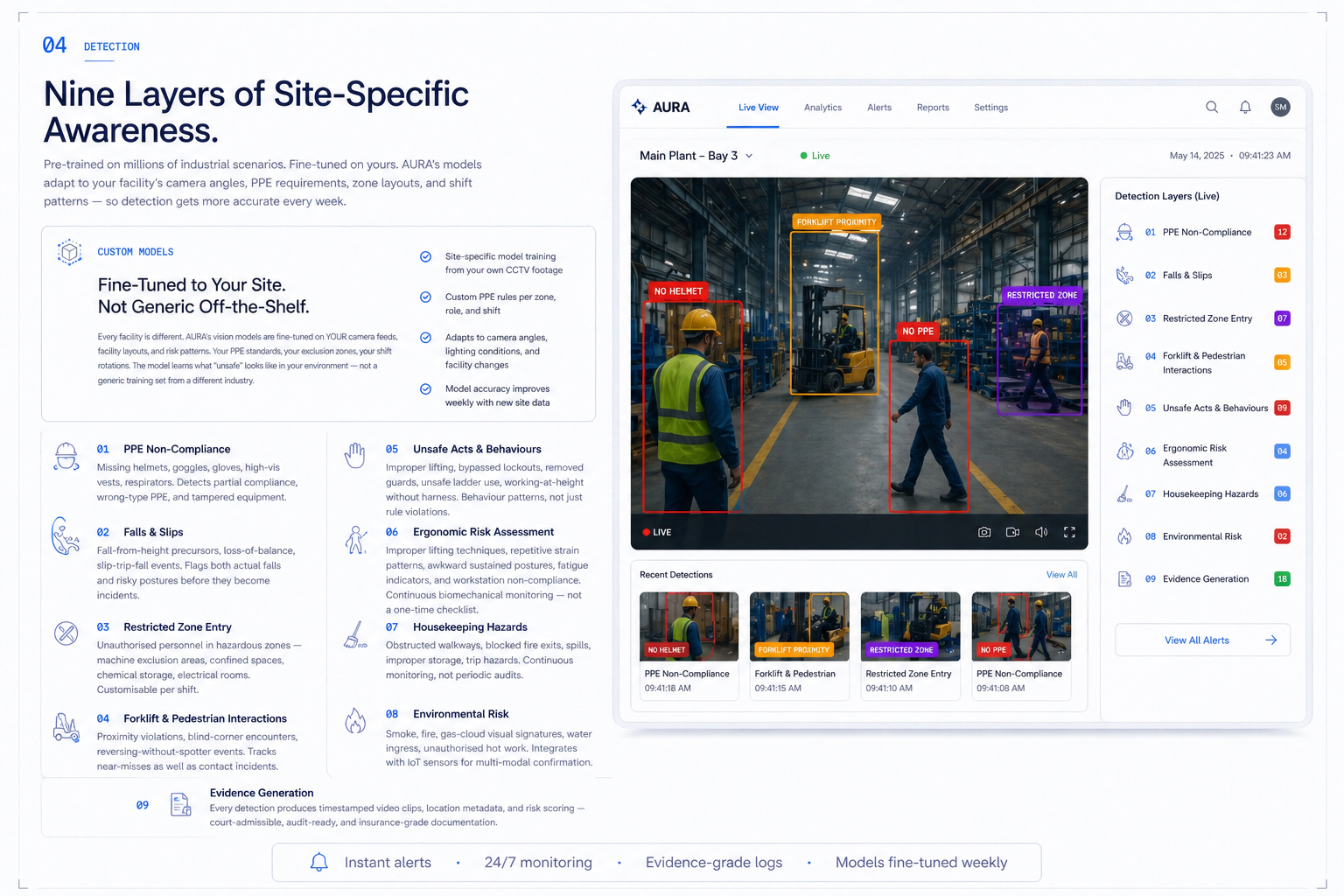

AURA, the Adaptive Safety Engine inside the SAFVR Safety Intelligence Platform, watches every camera continuously across nine detection categories — fine-tuned to your site, your PPE rules, and your zones. Each detection produces evidence, context, and a next action.

01 / Definition

What is AI hazard detection?

AI hazard detection uses computer vision safety models to identify unsafe acts, PPE non-compliance, restricted-zone entry, vehicle proximity, and near-miss patterns from camera feeds. In SAFVR, each detection creates evidence, context, and a next action so safety teams can respond before risk becomes an incident.

Your cameras already see everything.

Your teams catch almost nothing.

Pain today

- Hundreds of cameras, no intelligence connecting them.

- Manual review only ever happens after the incident.

- Generic motion alerts bury the alerts that actually matter.

- Near-miss precursors stay invisible — patterns repeat.

What AURA does instead

- AURA composites every feed into one continuous detection layer.

- Each event becomes evidence: clip, zone, timestamp, next action.

- Site-specific rules silence noise and surface the alerts worth acting on.

- Repeat precursors are scored across shifts so prevention can begin.
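To make the "event becomes evidence" idea concrete, here is a minimal sketch of what such a record could look like. The class name, field names, and sample values are illustrative assumptions, not AURA's actual schema or API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class DetectionEvent:
    """Hypothetical evidence record; field names are illustrative only."""
    category: str        # one of the detection categories, e.g. "ppe_compliance"
    zone: str            # site zone where the event was observed
    timestamp: datetime  # when the event was detected (UTC)
    clip_uri: str        # pointer to the recorded clip (made-up path below)
    next_action: str     # suggested follow-up for the safety team


def summarize(event: DetectionEvent) -> str:
    """Render a one-line summary a reviewer could scan in an alert feed."""
    return (
        f"[{event.timestamp.isoformat()}] {event.category} in {event.zone}: "
        f"{event.next_action} (clip: {event.clip_uri})"
    )


# Sample values are invented for illustration.
event = DetectionEvent(
    category="ppe_compliance",
    zone="loading-dock-2",
    timestamp=datetime(2024, 1, 15, 8, 30, tzinfo=timezone.utc),
    clip_uri="clips/example.mp4",
    next_action="Notify shift supervisor",
)
print(summarize(event))
```

Keeping clip, zone, timestamp, and next action in one immutable record is one way to let review and escalation work from the same evidence.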

Nine detection categories.

One continuous intelligence layer.

PPE compliance

Helmets, vests, gloves, respirators — per zone, per shift.

Falls & slips

Fall-from-height precursors and slip events captured live.

Confined space

Permit-aware monitoring of entries and presence over time.

Vehicle proximity

Forklift, GSE, and pedestrian interaction risk in real time.

Restricted zones

Virtual perimeters with permit and shift cross-reference.

Spill & housekeeping

Blocked exits, leaks, clutter — flagged with evidence.

Smoke & fire

Early environmental signals routed to the responsible team.

Posture & ergo

Awkward posture, repetition, and lifting strain over shifts.

Crowding & density

Occupancy and density thresholds across zones and gates.
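As a rough illustration of the crowding and density category, a per-zone threshold check might be sketched as follows. The function name, zone names, and limits are hypothetical, and the head counts are assumed to come from an upstream detector that is not shown here.

```python
def zones_over_threshold(counts: dict[str, int], limits: dict[str, int]) -> list[str]:
    """Return zones whose head count exceeds the configured limit, sorted by name.

    Zones without a configured limit are ignored rather than flagged.
    """
    return sorted(
        zone for zone, count in counts.items()
        if zone in limits and count > limits[zone]
    )


# Invented example: head counts per zone and site-specific limits.
current_counts = {"gate-a": 12, "bay-3": 4, "yard": 30}
zone_limits = {"gate-a": 10, "bay-3": 6}

print(zones_over_threshold(current_counts, zone_limits))
```

A zone is flagged only when its count is strictly above its limit, so a zone sitting exactly at the limit stays quiet.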

04 / Live frame

Annotation, status, and audit trail — composited from the same camera feed.

Every frame AURA processes carries its own evidence. Bounding boxes, status chips, and zone rules are recorded alongside the clip — so review, escalation, and prevention all run from the same source of truth.

<2s

Median detection latency

Source: Internal benchmark

9

Detection categories

Source: Platform capability

100%

Runs on existing cameras

Source: Deployment design

Designed

To support leading-indicator workflows

Source: In progress

Common questions about AI hazard detection.

- What is AI hazard detection?

- AI hazard detection uses computer vision models to identify unsafe acts, PPE non-compliance, restricted-zone entry, vehicle proximity, and near-miss patterns from camera feeds in real time. SAFVR's Adaptive Safety Engine (AURA) detects across nine industrial categories and produces evidence, context, and a next action for every event.

- Does AURA work with our existing CCTV cameras?

- Yes. AURA is designed to run on your existing IP cameras, with no new hardware required. It is camera-agnostic and integrates with most NVRs and VMS platforms used at industrial sites.

- Which hazards can AURA detect?

- Nine categories on day one: PPE compliance, falls and slips, confined-space entry, vehicle and pedestrian proximity, restricted zones, spills and housekeeping, smoke and fire, posture and ergonomics, and crowding/density. Detection rules are fine-tuned to your zones, shifts, and PPE standards.

- How accurate is AI PPE detection on a real plant floor?

- Accuracy depends on camera placement, lighting, and the specificity of your PPE rules. Models are fine-tuned per site during the 30-day pilot, and pilot accuracy is reported with frame-level evidence so EHS teams can validate before rollout.

- How quickly does the 30-day detection pilot go live?

- Most pilots begin processing live camera feeds within five to seven business days. That window covers camera integration, zone mapping, PPE rule configuration, and initial model calibration. The remaining pilot period is used to fine-tune detection thresholds and report accuracy with frame-level evidence.

- Does detection work in low-light or outdoor environments?

- AURA is designed to support detection across a range of lighting conditions, including low-light indoor areas and variable outdoor environments. Model performance is calibrated per site during the pilot using your actual camera feeds, and accuracy under specific conditions is reported with evidence so your team can evaluate coverage before rollout.

READY TO SEE IT ON YOUR CAMERAS?

Point AURA at one camera.

Watch it detect what walkthroughs miss.

30 days. Your existing CCTV. Nine hazard categories live on day one — PPE, fall risk, vehicle proximity, restricted zones, and more. You see real detections from your floor before you commit to anything beyond the pilot.

No new hardware. Existing IP cameras work. Setup in days.